Learning Spark Parquet vs CSV Performance Differences

Handling and managing large datasets is often a tedious task, and users often wonder which file format offers better data processing and storage. One of the most common comparisons when working with Apache Spark is Parquet vs CSV performance. This article explains the differences between the two formats and why users often choose one over the other.

Let’s begin by understanding the two formats first and then move to the differences.

What Is the Parquet File Format? Overview

Parquet is a columnar storage file format designed for large-scale data processing frameworks such as Apache Spark and Hadoop. Unlike row-based formats such as CSV, it stores data by column, which makes it well suited to analytical workloads on large datasets. Below are some of the features of Parquet files:

- Stores data column-wise, allowing Spark to read and process only the columns a query needs instead of the entire dataset.

- Supports efficient compression codecs (such as Snappy and GZIP) and encoding schemes (such as dictionary and run-length encoding), which help reduce storage space.

- Optimized primarily for query performance and analytics, especially read-heavy workloads.

- Widely supported across big data environments such as Hadoop and Spark, as well as many data warehouses and query engines.
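To give a feel for why column-wise storage compresses well, here is a toy sketch of two encoding ideas Parquet uses, dictionary encoding and run-length encoding, applied to a single column. The function names and data are hypothetical, and Parquet's real on-disk encodings (such as its RLE/bit-packing hybrid) are considerably more involved.

```python
from itertools import groupby


def dictionary_encode(column):
    """Replace each value with a small integer index into a list of distinct values."""
    dictionary = []
    index_of = {}
    indices = []
    for value in column:
        if value not in index_of:
            index_of[value] = len(dictionary)
            dictionary.append(value)
        indices.append(index_of[value])
    return dictionary, indices


def run_length_encode(values):
    """Collapse consecutive repeats into (value, run_length) pairs."""
    return [(value, len(list(group))) for value, group in groupby(values)]


# A hypothetical "country" column: runs of repeated values are common in real columns.
country = ["US", "US", "US", "US", "DE", "DE", "IN", "IN", "IN"]

dictionary, indices = dictionary_encode(country)
runs = run_length_encode(indices)

print(dictionary)  # ['US', 'DE', 'IN']
print(indices)     # [0, 0, 0, 0, 1, 1, 2, 2, 2]
print(runs)        # [(0, 4), (1, 2), (2, 3)]
```

Nine strings shrink to three distinct values plus three (index, count) pairs. This works because a column holds values of one kind that tend to repeat; in a row-based layout, the same values would be interleaved with other fields, breaking up the runs.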

These are some of the core capabilities of the Parquet file format. Before comparing Spark Parquet vs CSV performance, let’s look at the CSV file format, which will make the basic differences between the two easier to see.

More About CSV File Format – Uses and Features

The CSV (Comma-Separated Values) file format is a plain-text format that stores data in a row-based structure. Each line in the file represents a record, and the individual values are separated by a delimiter, usually a comma. Here are the features of the CSV file format:

- Data is stored row-wise and is human-readable, so users can easily open, read, and edit it as needed.

- It is widely supported and easy to share across platforms, databases, and data processing tools.

- CSV is well suited to data exchange, small datasets, and quick import and export tasks.

- It has no built-in optimization or compression features, which often results in larger files than the equivalent data stored as Parquet.
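As a quick illustration of the row-based, delimiter-separated layout described above, the sketch below parses a small, made-up CSV snippet with Python's standard `csv` module. Every value comes back as a string: CSV stores plain text and carries no type information, so any types have to be inferred or declared by whatever reads the file.

```python
import csv
import io

# A small, hypothetical CSV file: one header line, then one record per line.
csv_text = """id,name,amount
1,Alice,10.5
2,Bob,7.25
"""

reader = csv.DictReader(io.StringIO(csv_text))
records = list(reader)

for record in records:
    # Each line becomes one record; values are split on the comma delimiter.
    print(record)

# CSV is plain text, so every field is read back as a string.
print(type(records[0]["amount"]).__name__)  # str
```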

These are the main features of CSV files. As we can see, the two formats differ significantly, which makes the Parquet vs CSV performance comparison worth examining. Let’s now look at which format suits which situation.

Need to Change Parquet Files to CSV Format? Here’s the Quick Way!

Users often need to convert Parquet files to other formats, such as CSV. Few methods are available, and most of them require technical expertise. In such cases, a professional solution such as the FreeViewer Parquet Converter Tool, a utility that converts .parquet files into various file formats, can be the practical choice.

With the help of this tool, users can carry out the conversion without risking data integrity or structure.

Spark Parquet vs CSV Performance: Choosing the Right Format

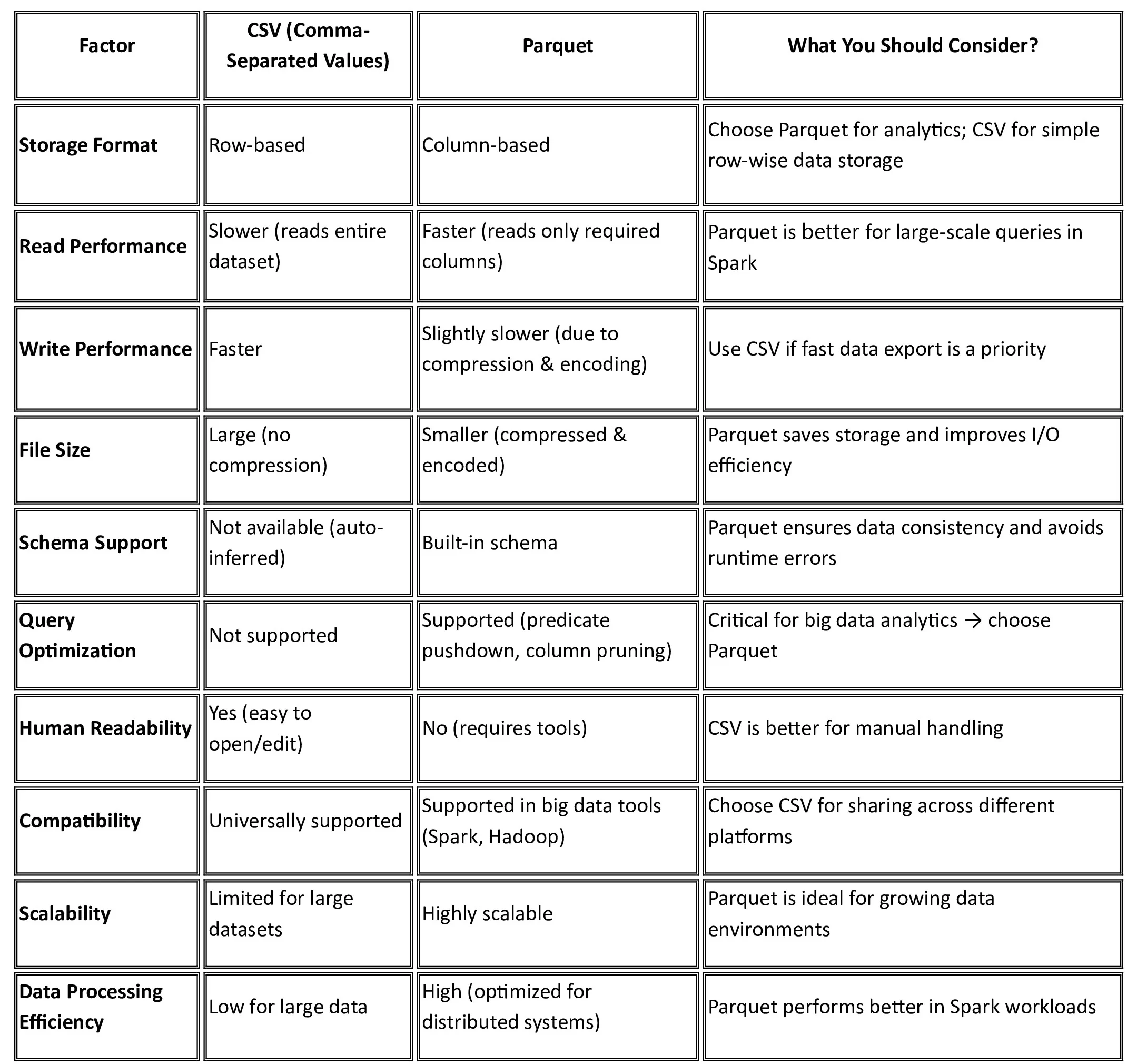

The following factors highlight the capabilities that make each format suited to particular data processing and storage needs. Let’s look at how Parquet and CSV differ on each:

Storage Structure of the File

- CSV files store data in a row-based structure, with each record stored on its own line.

- Parquet, on the other hand, uses a columnar structure, storing the values of each column together.
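The difference in layout can be sketched with plain Python data structures. This is only a conceptual model built on a tiny hypothetical table; real Parquet files additionally split rows into row groups and store each column chunk with its own metadata and encoding.

```python
# A tiny hypothetical table with three columns.
rows = [
    {"id": 1, "name": "Alice", "amount": 10.5},
    {"id": 2, "name": "Bob", "amount": 7.25},
    {"id": 3, "name": "Carol", "amount": 3.0},
]
column_names = ["id", "name", "amount"]

# Row-based layout (like CSV): each record's fields sit next to each other.
row_layout = [[r[name] for name in column_names] for r in rows]

# Columnar layout (like Parquet): all values of one column sit next to each other.
column_layout = {name: [r[name] for r in rows] for name in column_names}

print(row_layout)     # [[1, 'Alice', 10.5], [2, 'Bob', 7.25], [3, 'Carol', 3.0]]
print(column_layout)  # {'id': [1, 2, 3], 'name': ['Alice', 'Bob', 'Carol'], 'amount': [10.5, 7.25, 3.0]}
```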

Parquet vs CSV Read Performance

- When reading CSV files, Spark must read and parse complete rows, even when a query needs only some of the columns.

- With Parquet, Spark reads only the columns a query requires (column pruning), which typically makes reads faster than with CSV.
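The sketch below models this difference, assuming both layouts are stored as simple text: to total one column from a row-oriented file, every line has to be read and split, while a column-oriented store lets the reader fetch that single column and skip the rest. The function names and the `column_store` structure are hypothetical; Spark performs column pruning internally when a query selects a subset of columns from Parquet.

```python
import csv
import io

csv_text = "id,name,amount\n1,Alice,10.5\n2,Bob,7.25\n3,Carol,3.0\n"

# Hypothetical columnar store: each column serialized separately.
column_store = {
    "id": "1\n2\n3",
    "name": "Alice\nBob\nCarol",
    "amount": "10.5\n7.25\n3.0",
}


def sum_amount_row_based(text):
    """Row-based read: the whole text is scanned, even though only one field is used."""
    chars_scanned = len(text)
    reader = csv.DictReader(io.StringIO(text))
    total = sum(float(row["amount"]) for row in reader)
    return total, chars_scanned


def sum_amount_columnar(store):
    """Columnar read: only the 'amount' column is fetched; other columns are never touched."""
    data = store["amount"]
    chars_scanned = len(data)
    total = sum(float(v) for v in data.split("\n"))
    return total, chars_scanned


print(sum_amount_row_based(csv_text))     # (20.75, 51)
print(sum_amount_columnar(column_store))  # (20.75, 13)
```

Both functions return the same total, but the columnar version scans only the bytes of the one column it needs. On wide tables with many columns, that gap grows accordingly.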

Parquet File vs CSV File Size Comparison

- CSV files are comparatively larger because the format has no built-in compression.

- Parquet files are typically much smaller than equivalent CSV files because the format has built-in compression and encoding.
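To illustrate the effect of built-in compression, the sketch below compares uncompressed CSV text with the same hypothetical data stored column by column and compressed with Python's `zlib` (DEFLATE). This only loosely mimics Parquet, which applies its own codec (Snappy by default in Spark) plus encodings to each column's data pages; the numbers printed are illustrative and depend entirely on the made-up data.

```python
import zlib

# Hypothetical data: 1,000 rows whose "status" and "country" columns repeat heavily.
rows = [
    (i, "active" if i < 700 else "inactive", ["US", "DE", "IN", "FR"][i // 250])
    for i in range(1000)
]

# CSV-style storage: plain, uncompressed text.
csv_bytes = "".join(f"{i},{status},{country}\n" for i, status, country in rows).encode("utf-8")

# Column-wise storage, with a general-purpose codec applied to each column separately.
columns = {
    "id": "\n".join(str(i) for i, _, _ in rows),
    "status": "\n".join(s for _, s, _ in rows),
    "country": "\n".join(c for _, _, c in rows),
}
compressed_columns = {name: zlib.compress(text.encode("utf-8")) for name, text in columns.items()}
compressed_total = sum(len(blob) for blob in compressed_columns.values())

print("uncompressed CSV bytes:   ", len(csv_bytes))
print("compressed columnar bytes:", compressed_total)

# Compression is lossless: each column decompresses back to its original text.
for name, blob in compressed_columns.items():
    assert zlib.decompress(blob).decode("utf-8") == columns[name]
```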

Data Processing with Parquet and CSV

- CSV files are less efficient for large-scale data processing, since every query must read and parse the full text of each row.

- Parquet files are optimized for distributed data processing frameworks such as Hadoop and Spark.

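These factors show how each format stores data and how efficient it is in various respects. The table below summarizes the differences to help users choose the right format for storing and managing their data.

| Factor | CSV | Parquet |
|---|---|---|
| Storage structure | Row-based; each record on its own line | Columnar; values of each column stored together |
| Read performance | Reads complete rows, even when only some columns are needed | Reads only the required columns |
| File size | Larger; no built-in compression | Smaller; built-in compression and encoding |
| Data processing | Less efficient for large-scale processing | Optimized for distributed frameworks such as Hadoop and Spark |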

Conclusion

In this article, we have looked at the performance differences between Parquet and CSV in Spark. The two formats use different structures to store data: CSV stores records row by row, while Parquet stores values column by column. We have also discussed other aspects of each format and the situations in which each is the better choice. To make the comparison easier, we have also provided a table summarizing the key differences.